Section 2

Approach & Methodology

Introduction

Most organisations struggle with AI adoption not because the technology fails, but because they build the wrong things, in the wrong order, without knowing what's already available. The result is a graveyard of disconnected proofs of concept: each built in isolation, none connected to shared infrastructure, and with no discipline for stopping work that isn't delivering value.

At the same time, many organisations struggle to translate the unconstrained potential of AI (what the technology could do) into solutions that operate within the constraints of an enterprise environment: security, governance, data access, and operational support.

We take a different approach. Our model explicitly bridges these two worlds:

- exploring AI capabilities without artificial constraint during ideation and experimentation

- then systematically shaping them into solutions that are secure, governed, and scalable in production

Our end-to-end AI delivery model is structured, artefact-driven, and designed to move consistently from idea to production — while building reusable, enterprise-grade AI capabilities that make every subsequent use case cheaper and faster to deliver.

The model operates on two layers:

- The Use Case Layer (Steps 0-4) governs how each individual idea is discovered, validated, and prepared for build. It answers the question: is this worth building, and how should we build it?

- The Portfolio Layer governs what to build and when across the entire estate. It answers the question: given everything we know about demand, capability, and infrastructure — what is the most valuable thing to invest in next?

These layers run concurrently. The portfolio view continuously reprioritises as new use cases are submitted, experiments produce evidence, and capabilities are built.

Step 0: Human-Led Discovery & Framing

We start with the real problem, not just the idea.

Before any formal intake, we conduct targeted stakeholder conversations to understand the operational context behind each AI opportunity. This is deliberate: the best use cases come from people who understand the problem deeply but may not frame it in AI terms.

In these conversations, we:

- Understand the operational context and the true business problem — not just the surface-level request

- Capture jobs-to-be-done, pains, and desired outcomes from the people who live with the problem daily

- Identify constraints early: data availability, system dependencies, regulatory considerations, organisational readiness

- Surface existing solutions and prior efforts — what's already been tried, what worked, what didn't

This ensures every use case that enters the pipeline is grounded in real user needs and operational reality, not abstract ideas or technology-first thinking. It also builds the relationship between the AI Lab and the business units it serves — the Lab is a partner in solving problems, not a ticket queue.

The output of this step is a clear understanding of the opportunity, ready to be structured through the formal intake process.

Step 1: Use Case Intake

We create clarity from day one.

All use cases — whether newly discovered or pre-existing — enter through a single, governed front door. This is a deliberate design choice. A single intake point standardises inputs across the organisation, ensures every opportunity is assessed on the same basis, prevents duplication of effort, and provides full visibility of the pipeline.

Each use case is systematically captured across five pillars:

| Pillar | What It Captures | Why It Matters |
|---|---|---|
| Value | Problem statement, business impact, strategic alignment, scale and frequency of the problem | Ensures we're solving problems worth solving |
| Understanding | Current process maturity, workflow clarity, whether success metrics are defined | Reveals whether the problem is well-enough understood to act on |
| Data | Data types needed, where data lives, data quality, sensitivity level | Determines what's technically feasible and what governance is required |
| Capability | AI pattern required (classification, RAG, forecasting, etc.), platform capabilities needed, integration requirements | Maps the use case to the technical capabilities it demands |
| Readiness | Infrastructure readiness, governance requirements, team capability, blockers and dependencies | Shows whether the organisation is ready to support this use case |

The Front Door is initially human-led: the AI Lab team works directly with business users to capture and structure each opportunity. Over time, this evolves into a self-serve portal where business users can submit use cases directly, guided by an AI-assisted intake process that asks the right questions and ensures completeness.

Output: A standardised Use Case Card — scored across all five pillars, with a dependency category assigned, required capabilities identified, and blockers surfaced.
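To make the Use Case Card concrete, it could be held as a small structured record. The sketch below is illustrative only: the `UseCaseCard` class, its field names, the 1-5 scoring scale, and the example values are all assumptions, not part of the intake framework itself.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# The five pillars from the intake table.
PILLARS = ("value", "understanding", "data", "capability", "readiness")


@dataclass
class UseCaseCard:
    """Hypothetical Use Case Card; the 1-5 scoring scale is an assumption."""

    name: str
    scores: dict[str, int]      # pillar name -> score on the assumed 1-5 scale
    dependency_category: str    # label assigned at intake (allowed values are not specified in the text)
    required_capabilities: list[str] = field(default_factory=list)
    blockers: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # A card is only valid when every pillar has been scored.
        if set(self.scores) != set(PILLARS):
            raise ValueError(f"scores must cover exactly these pillars: {PILLARS}")
        for pillar, score in self.scores.items():
            if not 1 <= score <= 5:
                raise ValueError(f"{pillar} score {score} is outside 1-5")

    def weakest_pillars(self) -> list[str]:
        """Return the lowest-scoring pillar(s), in pillar order."""
        lowest = min(self.scores.values())
        return [p for p in PILLARS if self.scores[p] == lowest]


# Illustrative values only, not real intake data.
card = UseCaseCard(
    name="Contract report summarisation",
    scores={"value": 4, "understanding": 3, "data": 2, "capability": 4, "readiness": 2},
    dependency_category="needs shared capability",
    required_capabilities=["RAG", "summarisation"],
    blockers=["data sensitivity review pending"],
)
print(card.weakest_pillars())  # ['data', 'readiness']
```

Rejecting incomplete cards at construction time mirrors the intent of a single governed front door: nothing enters the pipeline until all five pillars have been assessed.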

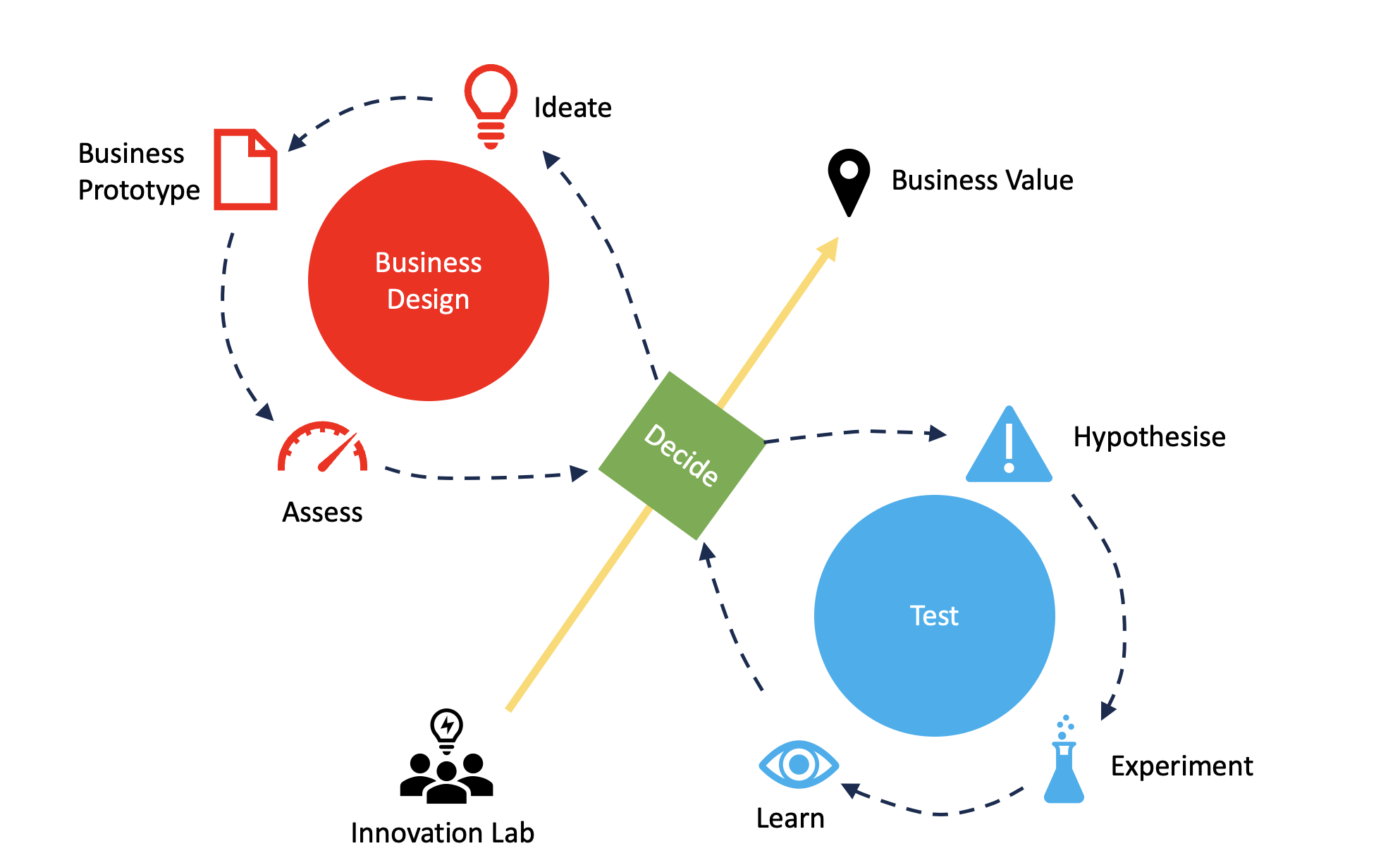

Step 2: Experiment Engine

We prove value before we build.

Prioritised use cases do not go straight to development. They enter a structured experimentation process designed to validate — or invalidate — the core assumptions before any meaningful investment is made.

This process operates as two connected cycles:

- Business Design — is this worth building?

- Test Cycle — can this actually work?

The Business Design Cycle

Ideate

We start with a clear understanding of the problem, user context, and desired outcomes — ensuring focus on real operational challenges.

Working sessions with stakeholders, domain experts, and delivery teams rapidly explore solution options. Problems are reframed into opportunity statements, AI intervention points are identified, and multiple approaches are explored in parallel using proven patterns such as RAG, classification, and workflow automation.

Ideas are quickly assessed against desirability and viability, creating a disciplined funnel from opportunity to testable concept.

Business Prototype

Promising ideas are translated into lightweight, working prototypes — not to prove technical perfection, but to test value.

Prototypes are built rapidly using reusable components aligned to the AI Capability Library (e.g. retrieval patterns, summarisation, speech interfaces), combined with representative data and simple interfaces grounded in real workflows. This allows us to assemble working solutions quickly, rather than building from first principles.

Development is strictly time-boxed to maintain pace and avoid over-engineering.

Each prototype is designed to answer three questions:

- Does this meaningfully solve the problem?

- Is the output useful and understandable?

- Would users adopt this in practice?

Stakeholders engage directly with the prototype, enabling fast feedback, refinement, or rejection before further investment.

Assess

Each use case is evaluated across three lenses:

- Desirable — does this solve a real problem and will users adopt it?

- Viable — does this deliver measurable business value?

- Feasible — can this be built and scaled?

At this stage, desirability and viability take priority. Feasibility is not treated as a hard gate — allowing high-value opportunities to progress even if capabilities are not yet in place, and enabling informed, portfolio-level investment decisions.

The Test Cycle

Hypothesise

Each experiment is defined with precision:

- We believe that...

- To verify, we will...

- We will measure...

- We are right if...

Experiments use real data, with success criteria defined upfront.

To ensure consistency and repeatability, we apply a standardised evaluation test suite, aligned to common AI capability patterns (e.g. retrieval quality, summarisation accuracy, workflow outputs). This allows experiments to be assessed objectively, rather than relying on subjective judgement.

All results are captured in an Experimentation Log, providing a transparent, auditable record of what was tested, learned, and decided.
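One way to keep the four-part hypothesis and its result together in the Experimentation Log is a simple record per experiment. This is a minimal sketch under stated assumptions: the `Experiment` class, its field names, the "higher is better" metric convention, and the example numbers are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Experiment:
    """One log entry following the four-part hypothesis template."""

    we_believe_that: str
    to_verify_we_will: str
    we_will_measure: str               # name of the metric
    we_are_right_if_at_least: float    # success threshold, fixed before the run
    observed: Optional[float] = None   # filled in once the experiment has run

    def outcome(self) -> str:
        # Assumes a metric where higher values are better.
        if self.observed is None:
            return "pending"
        if self.observed >= self.we_are_right_if_at_least:
            return "supported"
        return "not supported"


experiment_log: list[Experiment] = []

# Placeholder wording and numbers, for illustration only.
exp = Experiment(
    we_believe_that="retrieval over policy documents answers most analyst queries",
    to_verify_we_will="run the evaluation test suite on a sample of real queries",
    we_will_measure="share of answers judged correct",
    we_are_right_if_at_least=0.80,
)
experiment_log.append(exp)
print(exp.outcome())   # pending

exp.observed = 0.72    # hypothetical result
print(exp.outcome())   # not supported
```

Storing the threshold alongside the hypothesis, before any result exists, is what makes the log auditable: the success criterion cannot drift after the fact.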

Experiment & Learn

Experimentation builds confidence over time — it is not a single pass/fail step.

After each experiment, confidence is updated across desirability and viability. Multiple targeted experiments are run to reduce uncertainty. Strong signals increase confidence; weak or negative signals trigger refinement or alternative approaches.

The evaluation test suite is reused and extended across experiments, ensuring results are comparable as the solution evolves.

If confidence cannot be raised to an acceptable level, the use case is stopped.

Decide

We make evidence-based decisions.

Each use case reaches a formal decision point:

- Kill — insufficient value or confidence. Work stops and investment is redirected.

- Iterate — promising but inconclusive. Refine and re-test.

- Use Case Validated — sufficient confidence to proceed.

Kill discipline is intentional. Stopping weak ideas early protects investment and prevents accumulation of low-value solutions.
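The three outcomes above can be sketched as a simple rule over the current confidence levels. The thresholds, the 0-1 confidence scale, and the experiment budget below are assumptions chosen for illustration; in practice the decision forum, not a formula, makes the call.

```python
from enum import Enum


class Decision(Enum):
    KILL = "Kill"
    ITERATE = "Iterate"
    VALIDATED = "Use Case Validated"


def decide(
    desirability: float,
    viability: float,
    experiments_run: int,
    validate_at: float = 0.8,   # assumed confidence needed to proceed
    kill_below: float = 0.4,    # assumed floor below which work stops
    max_experiments: int = 5,   # assumed budget before an inconclusive case is stopped
) -> Decision:
    """Map confidence (0-1, assumed scale) to one of the three decisions."""
    # The weaker of the two lenses drives the decision.
    lowest = min(desirability, viability)
    if lowest >= validate_at:
        return Decision.VALIDATED
    if lowest < kill_below or experiments_run >= max_experiments:
        return Decision.KILL
    return Decision.ITERATE


print(decide(0.9, 0.85, experiments_run=2).value)  # Use Case Validated
print(decide(0.6, 0.7, experiments_run=2).value)   # Iterate
print(decide(0.6, 0.7, experiments_run=5).value)   # Kill
```

The experiment budget encodes the rule that a use case is stopped if confidence cannot be raised to an acceptable level, so "Iterate" cannot continue indefinitely.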

The Operating Rhythm

Experimentation runs on a structured cadence to maintain pace and transparency:

- Weekly planning — define hypotheses and experiments

- Daily standups — maintain momentum and remove blockers

- Weekly learning reviews — interpret results and adjust direction

- Monthly decision forums — make investment decisions with stakeholders

This ensures continuous learning, visible progress, and shared ownership of decisions.

Step 3: Build Readiness

We formalise before we scale.

Validated use cases are translated into a Build Readiness Pack — a structured, evidence-based handoff from experimentation to delivery.

This is developed collaboratively with business, architecture, security, data protection, and operations teams to ensure alignment with enterprise standards from the outset.

The pack defines:

- the validated problem and expected value

- key learnings and recommended solution approach

- required capabilities and platform alignment

- security, architecture, and governance requirements

- MVP scope, risks, and ownership

- a clear recommendation to proceed, iterate, or stop

This ensures delivery begins with clarity, alignment, and agreed constraints — not assumptions.

Governance is by design, not added later. Existing forums (architecture, security, data protection) are used to validate decisions early, avoiding late-stage blockers.

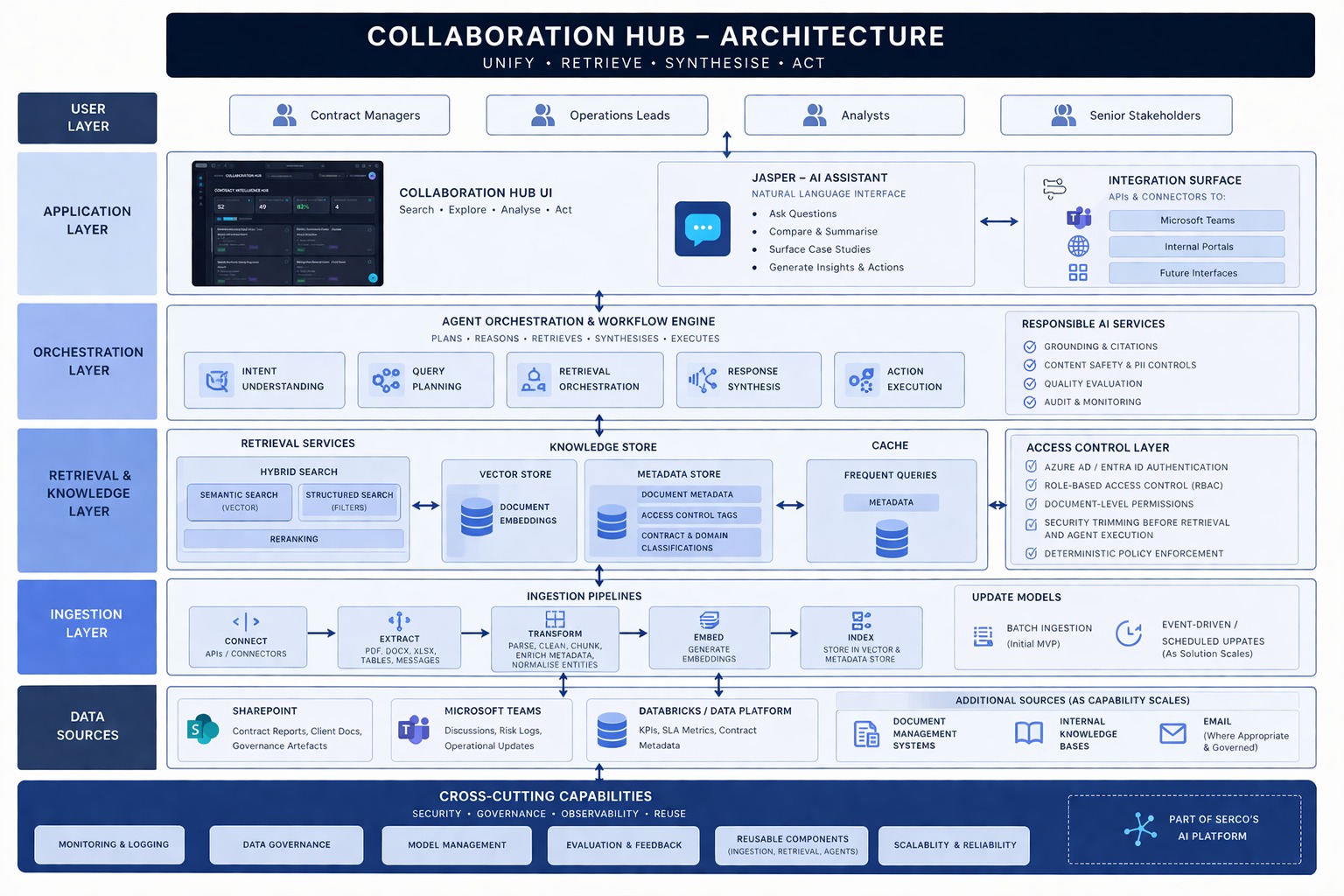

Step 4: Production Delivery & Scaling

We move directly from validated use case to controlled build.

Once approved, the use case enters delivery via the roadmap's "Now" horizon. The focus shifts from validation to execution.

Development begins with an MVP built on production-aligned architecture, governed data access, and reusable platform components. This is not a prototype — it is the foundation of a scalable solution.

The Build Readiness Pack feeds directly into delivery, generating structured engineering epics covering data, models, applications, security, and operational readiness. Teams can begin work immediately with clear scope and ownership.

Delivery progresses through three distinct stages:

MVP — Prove it works in reality

The solution is deployed to real users with real data. The objective is to demonstrate measurable value in a live context, not a simulated one.

Scaling — Prove it works reliably

Before wider rollout, the solution is validated with enterprise stakeholders (architecture, security, DPO, operations).

It is then scaled progressively using controlled release strategies. Performance, reliability, and adoption are monitored closely, with decisions to expand, refine, or halt based on evidence.

Scaling is addressed across two dimensions:

- Vertical scaling — increasing volume, performance, and reliability within the use case

- Horizontal scaling — extending the solution across users, business units, and additional use cases

This ensures solutions are not only technically robust, but capable of delivering value at enterprise scale.

Operate — Prove it continues to deliver value

The solution transitions into a managed product with defined service levels, monitoring, incident management, and clear ownership across teams.

All solutions are built using reusable, standardised components — including shared pipelines, integration patterns, and observability frameworks.

The same discipline applied in experimentation continues through delivery. If a solution does not demonstrate expected value, adoption, or performance, it is refined or stopped — not scaled.

Delivery Acceleration & Reusable Assets

Delivery is accelerated through a set of reusable assets embedded across each stage of the lifecycle. These are not standalone tools, but integrated components used during discovery, experimentation, and production delivery.

| Stage | Reusable Assets | Purpose |
|---|---|---|
| Discovery & Intake | Use Case Card template, structured intake framework | Standardises inputs, ensures consistent evaluation and prioritisation |
| Experiment Engine | Prompt templates, RAG prototypes, evaluation harness, Experiment Library | Rapidly test ideas using proven patterns and measurable criteria |
| Business Prototype | Low-code UI patterns, workflow templates, sample datasets | Quickly create interactive prototypes aligned to real workflows |
| Build Readiness | Build Readiness Pack template, architecture patterns | Translate validated ideas into delivery-ready specifications |
| MVP Build | Reference architectures, reusable pipelines, orchestration patterns | Accelerate development using production-aligned components |
| Scaling & Operate | Monitoring frameworks, logging standards, evaluation pipelines | Ensure reliability, performance, and continuous improvement in production |

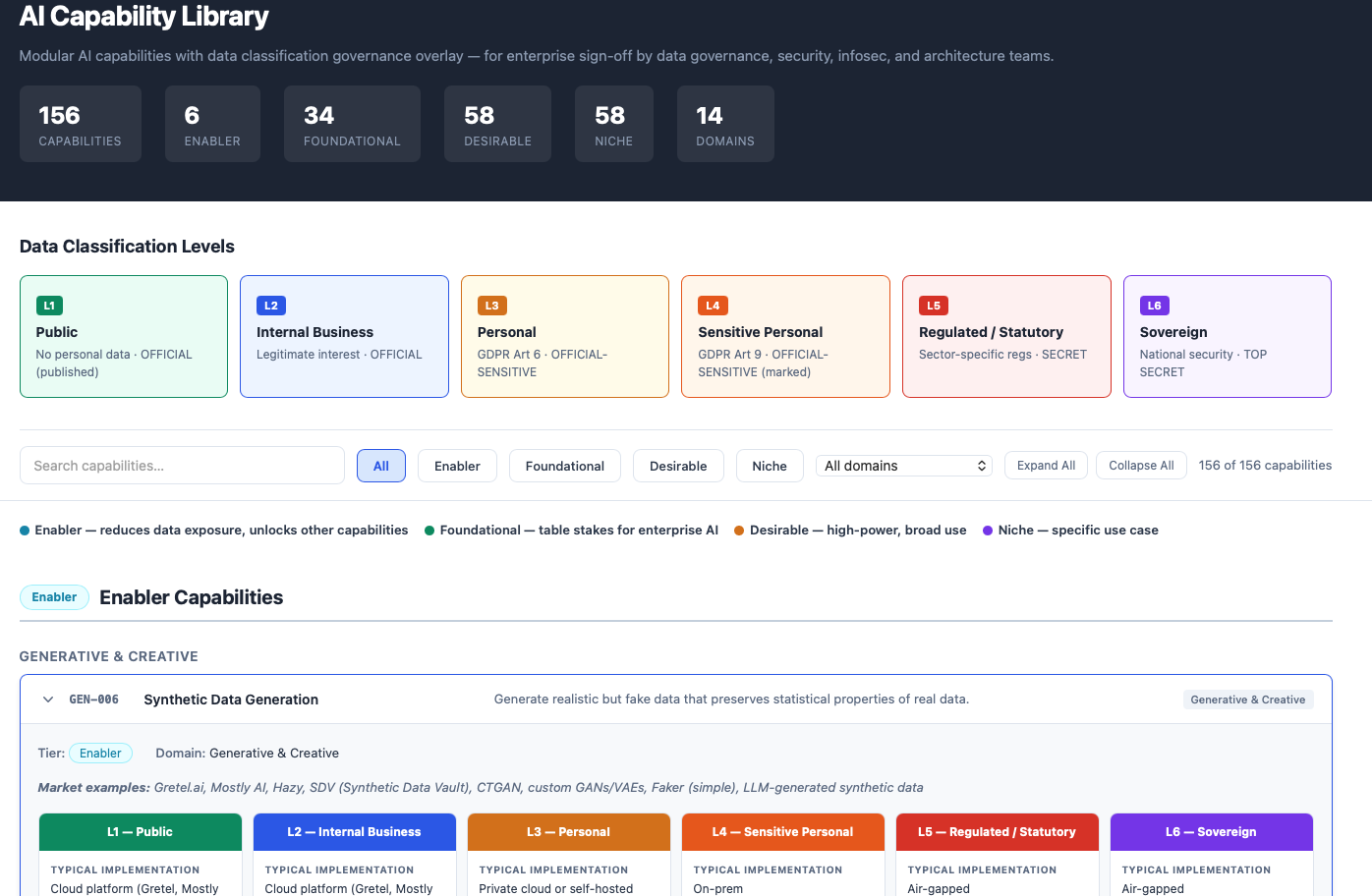

The Portfolio Layer

We don't prioritise use cases in isolation; we invest at the capability level.

While the Use Case Layer validates individual opportunities, the Portfolio Layer determines what to build and when across the estate. It brings all use cases into a single view, enabling informed, evidence-based investment decisions.

The objective is simple: maximise value by investing in the capabilities that unlock the most impact.

Map Demand Across Use Cases

Each validated use case defines a set of required capabilities — such as retrieval (RAG), classification, forecasting, or workflow automation.

When aggregated, these requirements create a clear, structured view of demand across the organisation. This allows us to identify common patterns, shared dependencies, and opportunities for reuse — shifting the focus from individual solutions to underlying capabilities.
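The aggregation step can be sketched as a count of how many use cases require each capability. The function name `map_demand` and the example use cases are hypothetical; a real demand map would be built from the Use Case Cards.

```python
from __future__ import annotations

from collections import Counter


def map_demand(use_cases: dict[str, list[str]]) -> Counter:
    """Count how many use cases require each capability."""
    demand: Counter = Counter()
    for capabilities in use_cases.values():
        # Use a set so a capability listed twice counts once per use case.
        demand.update(set(capabilities))
    return demand


# Illustrative portfolio only.
validated = {
    "reporting": ["RAG", "summarisation"],
    "risk identification": ["RAG", "classification"],
    "knowledge access": ["RAG"],
}
demand = map_demand(validated)
print(demand.most_common(1))  # [('RAG', 3)]
```

Even at this level of simplicity, the count makes shared dependencies visible: a capability required by three use cases is a stronger investment candidate than one required by a single use case.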

Assess Current Capability Maturity

In parallel, we assess the current technology landscape — including existing AI solutions, data platforms, integrations, and infrastructure.

This is not a static inventory. Capabilities are evaluated for scalability, governance, and reusability, providing a clear view of:

- what can be leveraged

- what requires enhancement

- what is missing entirely

Capability Gap Analysis

By comparing demand with supply, we identify the capability gap — the set of capabilities that must be built, enhanced, or standardised to support the portfolio.

This reframes the investment question from "Which use case should we build next?" to "Which capability unlocks the most value across multiple use cases?"

Feasibility is assessed at this level, considering dependencies such as data platforms, infrastructure, security, and governance.
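The comparison of demand with supply could be sketched as follows. The status labels (`"ready"`, `"enhance"`, `"missing"`), the function name, and the use of "number of use cases unlocked" as a proxy for value are all assumptions; a real analysis would weight each use case by its expected value.

```python
from __future__ import annotations


def capability_gaps(
    demand: dict[str, int],    # capability -> number of use cases that need it
    supply: dict[str, str],    # capability -> "ready" or "enhance" (assumed labels)
) -> list[tuple[str, int, str]]:
    """Return (capability, use cases unlocked, status) for every gap, largest first."""
    gaps = []
    for capability, use_case_count in demand.items():
        # Anything demanded but absent from the supply inventory is missing entirely.
        status = supply.get(capability, "missing")
        if status != "ready":
            gaps.append((capability, use_case_count, status))
    # Rank by use cases unlocked, then alphabetically for a stable order.
    return sorted(gaps, key=lambda gap: (-gap[1], gap[0]))


# Illustrative numbers only.
demand_by_capability = {"RAG": 3, "classification": 1, "workflow automation": 2, "forecasting": 1}
current_supply = {"RAG": "enhance", "classification": "ready", "workflow automation": "enhance"}

for gap in capability_gaps(demand_by_capability, current_supply):
    print(gap)
# ('RAG', 3, 'enhance')
# ('workflow automation', 2, 'enhance')
# ('forecasting', 1, 'missing')
```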

Business Case Development & Value Bundling

Use cases are not progressed in isolation. As part of portfolio management, we translate prioritised opportunities into structured business cases, aligned to Serco's investment and governance processes.

Tiered Business Case Model:

- Now (Short-term) — Clear, near-term value using existing capabilities. Focused on quick wins, efficiency gains, and early adoption.

- Next (Medium-term) — Requires targeted capability investment (e.g. data readiness, integration, orchestration). Combines delivery of use cases with capability build.

- Later (Long-term) — Dependent on more advanced or emerging capabilities. Positioned as strategic opportunities, not immediate commitments.
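The tiering logic above can be sketched as a rule over the maturity of each required capability. The maturity labels (`"available"`, `"investment"`, `"emerging"`) and the function name `roadmap_tier` are assumptions introduced for illustration.

```python
from __future__ import annotations


def roadmap_tier(required: list[str], maturity: dict[str, str]) -> str:
    """Place a use case in Now, Next, or Later from its capability dependencies.

    maturity maps capability -> "available", "investment", or "emerging"
    (assumed labels; every required capability must have been assessed).
    """
    unassessed = [c for c in required if c not in maturity]
    if unassessed:
        raise ValueError(f"capabilities not yet assessed: {unassessed}")
    statuses = {maturity[c] for c in required}
    if "emerging" in statuses:
        return "Later"   # depends on advanced or emerging capabilities
    if "investment" in statuses:
        return "Next"    # needs targeted capability investment first
    return "Now"         # deliverable with existing capabilities


# Illustrative maturity assessment only.
maturity = {"RAG": "available", "orchestration": "investment", "autonomous agents": "emerging"}
print(roadmap_tier(["RAG"], maturity))                       # Now
print(roadmap_tier(["RAG", "orchestration"], maturity))      # Next
print(roadmap_tier(["RAG", "autonomous agents"], maturity))  # Later
```

The least mature dependency sets the tier, which keeps "Now" reserved for work that can start without further capability build.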

Each business case includes:

- expected business outcomes (e.g. time saved, cost reduction, improved contract performance)

- delivery scope and dependencies

- required capability investments

- indicative cost vs value profile

- success metrics and adoption assumptions

Value Bundling Across Use Cases

Where multiple use cases rely on the same underlying capabilities, we group them into investment bundles rather than assessing them independently.

For example:

- A shared RAG capability may support reporting, risk identification, and knowledge access

- A shared workflow orchestration layer may enable multiple operational use cases

This allows capability costs to be amortised across multiple use cases, produces stronger, more compelling business cases, and avoids duplicated investment.

Instead of funding isolated use cases, Serco invests in capabilities that unlock multiple outcomes.
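The arithmetic behind bundling can be sketched with a simple comparison. All figures below are placeholders in arbitrary units, and the model assumes that, without bundling, each use case would have to fund the shared capability in full; both the function name and that assumption are illustrative.

```python
from __future__ import annotations


def bundle_business_case(
    capability_cost: float,
    use_cases: dict[str, tuple[float, float]],  # name -> (expected value, delivery cost)
) -> dict[str, float]:
    """Compare funding a shared capability once against funding it per use case."""
    if not use_cases:
        raise ValueError("a bundle needs at least one use case")
    count = len(use_cases)
    total_value = sum(value for value, _ in use_cases.values())
    total_delivery = sum(cost for _, cost in use_cases.values())
    # Bundled: the capability is built once and shared.
    bundled_net = total_value - total_delivery - capability_cost
    # Standalone (assumed worst case): every use case builds the capability itself.
    standalone_net = total_value - total_delivery - capability_cost * count
    return {
        "capability_share_per_use_case": capability_cost / count,
        "bundled_net_value": bundled_net,
        "standalone_net_value": standalone_net,
        "duplicated_investment_avoided": bundled_net - standalone_net,
    }


# Placeholder figures, arbitrary units.
case = bundle_business_case(
    capability_cost=300,
    use_cases={
        "reporting": (400, 50),
        "risk identification": (250, 50),
        "knowledge access": (200, 50),
    },
)
print(case["bundled_net_value"])     # 400
print(case["standalone_net_value"])  # -200
```

In this illustration two of the three use cases would not justify the capability on their own, yet the bundle as a whole is clearly positive, which is the effect bundling is intended to surface.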

Capability-Led Roadmapping

The portfolio layer continuously integrates new evidence from the use case layer — validated use cases, experiment results, capability maturity assessments — into an evolving, evidence-based roadmap.

Summary

This approach enables Serco to move beyond isolated AI initiatives and instead build a coherent, scalable AI capability. This end-to-end model delivers five outcomes:

- Systematic identification and prioritisation: Every AI opportunity is captured, assessed, and compared on the same basis. Nothing falls through the cracks. Investment goes where the evidence points.

- Validation before commitment: No use case reaches production without passing through structured experimentation and evidence-based decision gates. This protects against the most common AI failure mode: building something nobody needs.

- Scalable, production-ready delivery: Solutions are built on reusable components and shared infrastructure, not as isolated projects. Each use case strengthens the platform for the next one.

- Maximum return on capability investment: The AI Capability Library and Capability Gap Analysis ensure that infrastructure investments are strategic, building enabling capabilities that unlock the broadest set of future opportunities rather than solving one problem at a time.

- Internal capability, not external dependency: Knowledge transfer is embedded in every ceremony, every artefact, and every handoff point. The methodology is designed to be owned and operated internally. Our success is measured by whether you can run the next use case without us.